There’s a common assumption that the engineers who’ll thrive in an AI-assisted world will be the fastest typists, the deepest technical specialists, the people who’ve been in the codebase the longest.

I think that assumption is wrong.

The people with the structural advantage are the ones who already know how to direct, evaluate, and course-correct the work of others. In other words: engineering leaders.

Why Managers Are Already Trained for This

Managing engineers is not that different from managing AI agents. Think about what a good engineering leader actually does:

- Defines clear outcomes instead of prescribing every line of implementation

- Reviews output for correctness and alignment with goals

- Identifies edge cases and risks before they become problems

- Iterates based on feedback, not ego

- Optimizes for delivery, not perfection

- Knows when “good enough” is genuinely good enough, and acts on that judgment

That’s also exactly how effective AI-assisted development works.

When I work with agents, I’m not writing every line. I’m setting constraints, defining architecture, evaluating trade-offs, steering direction, and refactoring through feedback loops. In other words, I’m supervising the development of code rather than manually producing every character.

The key insight: engineering leaders are already trained to move work forward even when the output isn’t exactly how they would have written it. That mindset is rare. It’s also the skill that separates productive AI collaboration from frustrating AI babysitting.

AI doesn’t need you to be the fastest typist in the room. It needs you to think clearly, spot flaws quickly, and keep momentum. Those are leadership skills.

The Math Changes When Agents Run in Parallel

The real shift isn’t just working faster. It’s working differently.

A few weeks ago I ran two separate agent sessions simultaneously:

Session one: An agent scanning our production error database, identifying the fifty most common exceptions, creating properly structured Jira tickets for each one, and beginning remediation passes.

Session two: A separate agent helping implement a complex UI workflow in a new application, iterating on edge cases, tightening validation, handling the inevitable regressions.

Two terminals. One instance of Visual Studio. One human in the loop.

The output: work that would normally take a team of eight to ten engineers a week to triage, spec, and begin executing, compressed into a single focused session.

This isn’t the same as working faster. It’s a different category of output. Parallel analysis plus parallel execution means the leverage isn’t linear anymore. When one engineer can operate with the coordination of a small team, a team of seven starts to rival organizations many times its size.

That forces some uncomfortable questions that most organizations haven’t started asking:

- How do we rethink team composition when individual leverage has changed this dramatically?

- What does sprint planning look like when one person can run multiple workstreams?

- What do we actually hire for now?

- What does “Definition of Done” mean when continuous refactoring is built into the workflow?

The companies that start asking (and answering) these questions now will have a significant advantage over those waiting for the technology to “stabilize” before adapting.
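To make the triage step from session one a little more concrete, here is a minimal Python sketch of the "find the most common exceptions and draft a ticket for each" part. Everything in it is an assumption for illustration: `ErrorEvent`, `most_common_exceptions`, and `draft_ticket` are hypothetical names, the event shape is invented, and the ticket is a plain dictionary rather than a call to any real Jira API. It is not the workflow the agent actually ran, just one way the same idea could look.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ErrorEvent:
    """One logged production error (hypothetical shape, for illustration)."""
    exception_type: str
    message: str


def most_common_exceptions(events, limit=50):
    """Return (exception_type, count) pairs, most frequent first."""
    counts = Counter(event.exception_type for event in events)
    return counts.most_common(limit)


def draft_ticket(exception_type, count):
    """Build a plain dict describing a ticket; nothing is sent anywhere."""
    return {
        "summary": f"{exception_type}: {count} occurrences in production",
        "labels": ["triage", "auto-drafted"],
        # Assumption: a human approves every draft before it is filed.
        "needs_human_review": True,
    }
```

Calling `most_common_exceptions(events)` and passing each pair to `draft_ticket` yields a ranked list of drafts. The `needs_human_review` flag is there to reflect the "one human in the loop" point: the agent does the counting and drafting, and a person decides what actually gets filed.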

The Quality of Output Reflects the Quality of Leadership

Here’s what I’ve observed directly, building production software this way over several months: the quality of what AI generates has very little to do with the model and almost everything to do with the structure, expectations, and feedback wrapped around it.

The same model, given different inputs, produces dramatically different outputs. A vague directive produces vague code. A well-scoped, properly constrained, example-rich brief produces code that fits cleanly into the existing architecture, follows established patterns, and requires minimal correction.

That’s not an AI phenomenon. That’s a leadership phenomenon. It’s the same dynamic we see when experienced engineers give direction to junior developers. The quality of the mentorship, not the raw capability of the person being directed, determines the quality of the output.

What made the difference in my experience wasn’t finding a clever AI trick. It was applying the same discipline I expect from high-performing teams:

- Scoping work intentionally before handing it to an agent

- Supplying concrete examples and constraints rather than hoping the model infers them

- Maintaining architecture and coding standards in writing, loaded at the start of every session

- Reviewing output critically and giving specific, actionable feedback rather than vague corrections

- Making design decisions at the right level: not delegating architectural choices to a model that has no stake in the outcome

These are table stakes for anyone who’s led a software team. They’re also, it turns out, exactly the inputs that make AI agents significantly more productive.
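The habits listed above can be sketched as a small data structure. This is a hypothetical illustration, not a tool described in this article: `AgentBrief` and its field names are assumptions, and the rendered text is simply something you might paste or load at the start of a session. The point it shows is that scope, constraints, examples, and written standards can be treated as required inputs and checked before any work is handed off.

```python
from dataclasses import dataclass, field


@dataclass
class AgentBrief:
    """A written brief handed to an agent at session start (hypothetical)."""
    goal: str
    in_scope: list[str] = field(default_factory=list)
    constraints: list[str] = field(default_factory=list)
    examples: list[str] = field(default_factory=list)
    standards: str = ""  # text of the team's written architecture and coding standards

    def missing_sections(self) -> list[str]:
        """Name the sections left empty, so gaps are caught before the hand-off."""
        checks = {
            "in_scope": self.in_scope,
            "constraints": self.constraints,
            "examples": self.examples,
            "standards": self.standards.strip(),
        }
        return [name for name, value in checks.items() if not value]

    def render(self) -> str:
        """Flatten the brief into plain text for the start of a session."""
        def bullets(items):
            return "\n".join(f"- {item}" for item in items)

        parts = [
            f"Goal: {self.goal}",
            "In scope:\n" + bullets(self.in_scope),
            "Constraints:\n" + bullets(self.constraints),
            "Examples to follow:\n" + bullets(self.examples),
            "Standards:\n" + self.standards.strip(),
        ]
        return "\n\n".join(parts)
```

A brief with only a goal and one constraint would report `in_scope`, `examples`, and `standards` as missing, which is the same signal a good lead gets when a ticket arrives underspecified. Architectural decisions stay in the human-written fields rather than being left for the model to infer.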

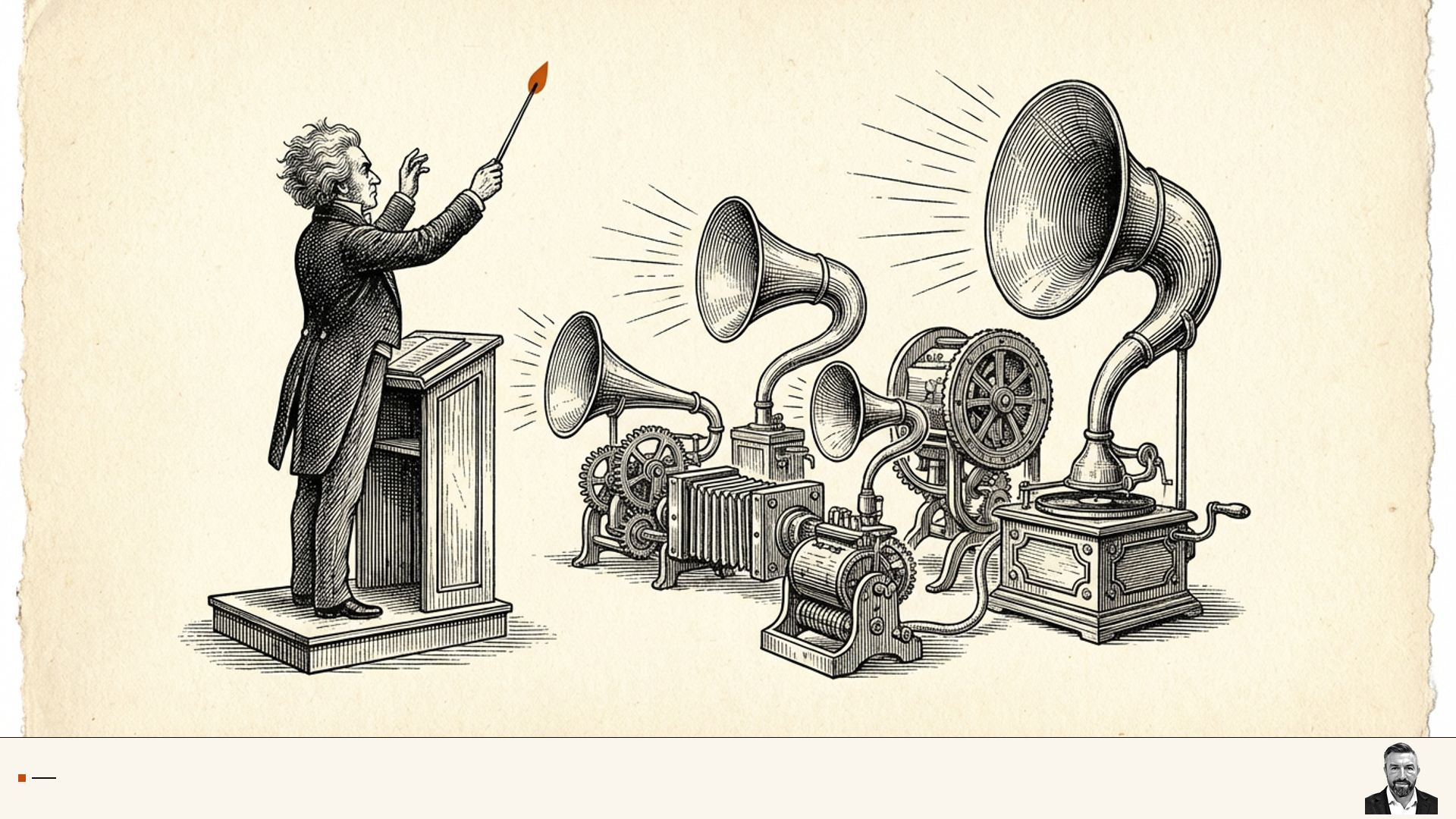

Orchestration Is the New Engineering Leadership

Agentic coding is less about hands-on implementation and more about orchestration. Orchestration has always been a management skill.

The future belongs to leaders who can direct intelligent systems with the same clarity and discipline they once brought to directing teams. Not because the technical skills no longer matter (they matter enormously, as context and judgment) but because the bottleneck has shifted.

The bottleneck used to be implementation speed. Teams were limited by how fast their engineers could produce working code.

That constraint is collapsing. The new bottleneck is clarity of intent, quality of standards, and speed of decision-making at the leadership layer.

AI amplifies what’s already there. If you have strong engineering leadership (clear thinking, high standards, fast feedback loops, good judgment about trade-offs), agents amplify that into disproportionate output.

If you have weak engineering leadership, agents amplify that too. The only difference is that the poor output now arrives faster and in larger quantities.

The leverage is real. The question is whether you’re ready to apply the leadership skills you already have to this new kind of team.